The Quantum Frontier (Part 2): The 'Crypto-Apocalypse': Why Shor's Algorithm Makes Your Data Obsolete

By Ryan Wentzel

10 Min. Read#Quantum#AI#RSA#Encryption#Shor's Algorithm

Table of Contents

- The Mathematical Wall Protecting Your Data

- Peter Shor's Quantum "Cheat Code"

- How Shor's Algorithm Actually Works (The Concept)

- The Threat is Now: 'Harvest Now, Decrypt Later'

- Conclusion

The Mathematical Wall Protecting Your Data

Modern security relies on the hardness of prime factorization (RSA) and discrete logs (ECC). Classical computers cannot feasibly reverse these operations at large key sizes. But to understand why Shor's algorithm is so dangerous, you first need to understand exactly what these cryptographic walls are made of and why we trusted them in the first place.

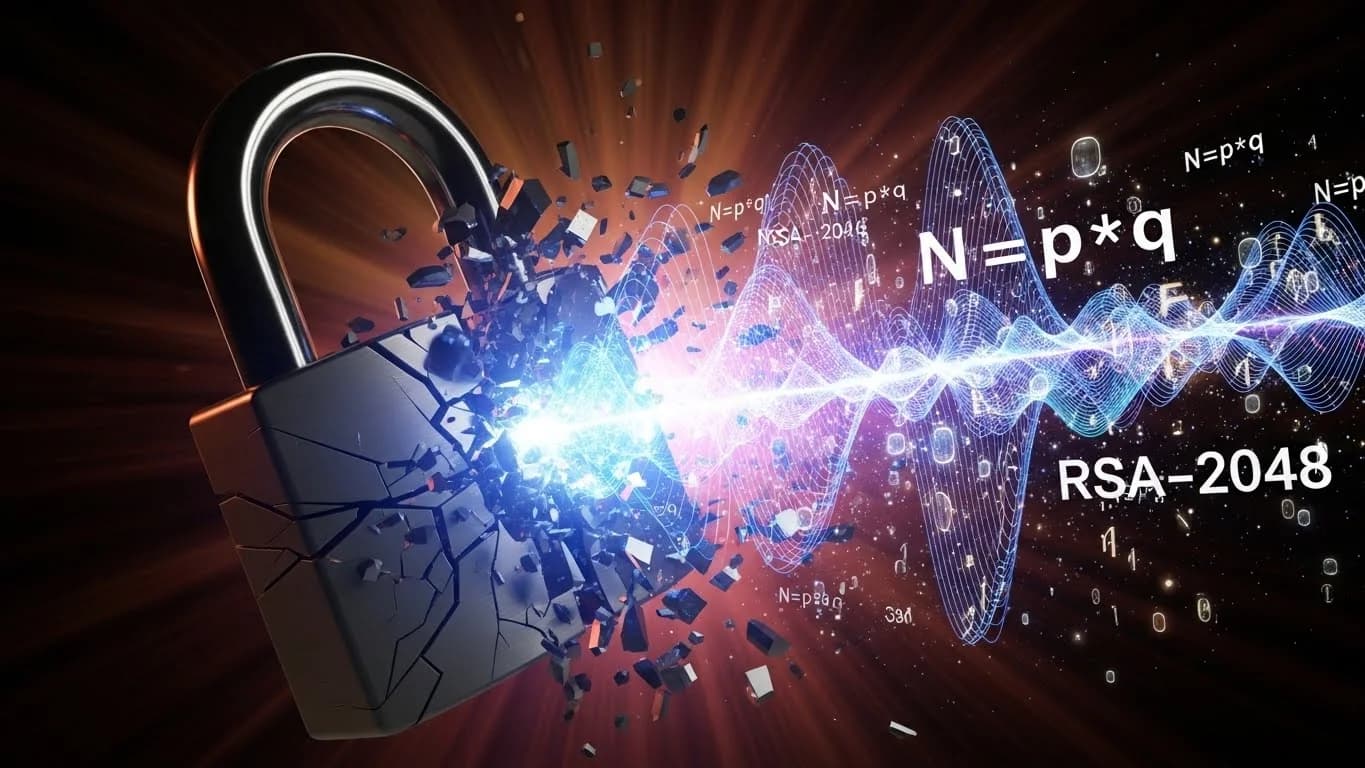

RSA: The Prime Factorization Trapdoor

RSA, named after its inventors Rivest, Shamir, and Adleman, is the most widely deployed public-key cryptosystem in history. Its security rests on a simple asymmetry: multiplying two large prime numbers together is trivially fast, but factoring the product back into its prime components is extraordinarily slow.

Here is how RSA key generation works at a high level. You select two large prime numbers, p and q, each typically 1,024 bits long. You multiply them to produce n = p _ q, a 2,048-bit number. You compute a related value called the totient, phi(n) = (p-1)(q-1), and select a public exponent e (commonly 65,537). Finally, you compute the private exponent d such that e _ d = 1 mod phi(n). The public key is the pair (n, e). The private key is d.

The trapdoor is this: anyone who knows p and q can trivially compute d from e. But anyone who only knows n (the product) must factor it to recover p and q, and factoring a 2,048-bit number is believed to be computationally infeasible for classical computers. The best known classical factoring algorithm, the General Number Field Sieve, has sub-exponential but super-polynomial complexity. For a 2,048-bit RSA modulus, this translates to an estimated computational effort requiring billions of years on the fastest classical supercomputers.

This is not merely a large number. It is a number so large that it exceeds the operational lifetime of our solar system. That is the wall.

ECC: The Discrete Logarithm Problem

Elliptic Curve Cryptography provides equivalent security to RSA with dramatically smaller key sizes. A 256-bit ECC key offers roughly the same security as a 3,072-bit RSA key. ECC's security relies on a different mathematical problem: the Elliptic Curve Discrete Logarithm Problem (ECDLP).

Given two points on an elliptic curve, P and Q, where Q = k * P (meaning P has been added to itself k times using the curve's group operation), finding k from P and Q alone is computationally infeasible. The best classical attacks against ECDLP run in approximately the square root of the group order, making a 256-bit curve require roughly 2^128 operations to break, which is firmly in the "heat death of the universe" territory for classical machines.

ECC is everywhere: TLS 1.3 (which secures virtually all web traffic), Signal Protocol (end-to-end encrypted messaging), cryptocurrency wallets (Bitcoin and Ethereum both use the secp256k1 curve), and code signing. Its efficiency and small key sizes made it the natural successor to RSA for many applications.

Why We Trusted the Wall

For over four decades, these mathematical problems have withstood intense scrutiny from the global mathematics and computer science communities. The factoring problem and the discrete logarithm problem are among the most studied in all of mathematics. No one has found a polynomial-time classical algorithm for either. The collective confidence of the cryptographic community in these problems was not naive; it was earned through decades of failed attacks.

And then Peter Shor published a paper.

Peter Shor's Quantum "Cheat Code"

In 1994, mathematician Peter Shor, then at AT&T Bell Laboratories, published a paper that fundamentally changed the trajectory of both quantum computing and cryptography. The paper demonstrated that a quantum computer could factor integers and compute discrete logarithms in polynomial time, an exponential speedup over the best known classical algorithms.

The Context of the Discovery

To appreciate the significance, consider the state of quantum computing in 1994. The field was largely theoretical. No one had built a quantum computer with more than a handful of qubits. Many physicists doubted that practical quantum computation was even possible, given the extreme fragility of quantum states.

Shor's algorithm changed the conversation overnight. It provided the first compelling reason to build a quantum computer: the ability to break the cryptographic infrastructure that underpins the global economy. Suddenly, quantum computing had a killer application, and it was terrifying.

The result also catalyzed the field of quantum error correction (which we will explore in Part 6 of this series). If Shor's algorithm was to be a real threat, quantum computers needed to be large and reliable enough to execute it. That challenge drove an entire generation of research into fault-tolerant quantum computing.

What Shor's Algorithm Actually Breaks

Shor's algorithm efficiently solves two problems:

- Integer factorization: Given a composite number N, find its prime factors. This breaks RSA.

- Discrete logarithm: Given g, p, and g^x mod p, find x. A variant of the algorithm also solves the elliptic curve discrete logarithm problem. This breaks ECC, Diffie-Hellman key exchange, DSA, and ECDSA.

The implication is stark. Every public-key cryptosystem in widespread deployment today, RSA, ECC, Diffie-Hellman, DSA, and their elliptic curve variants, is vulnerable to a sufficiently powerful quantum computer running Shor's algorithm.

How Shor's Algorithm Actually Works (The Concept)

Shor's brilliance lay in recognizing that factoring could be reduced to a different problem, period-finding, and that quantum computers are exceptionally good at finding periods.

Step 1: Reducing Factoring to Period-Finding

Suppose you want to factor a composite number N. Shor showed that if you can find the period r of the function f(x) = a^x mod N for a randomly chosen a, then with high probability you can extract a non-trivial factor of N using the greatest common divisor: gcd(a^(r/2) - 1, N) or gcd(a^(r/2) + 1, N).

This reduction is itself classical. It relies on number theory results that were well known before Shor. The key quantum ingredient is finding the period r efficiently.

Step 2: Quantum Period-Finding via the Quantum Fourier Transform

Classically, finding the period of f(x) = a^x mod N requires evaluating the function for exponentially many values of x. A quantum computer, using superposition, can evaluate f(x) for all values of x simultaneously by preparing a superposition of all possible inputs and computing f in parallel across all of them.

After this parallel computation, the quantum register holds a superposition of all (x, f(x)) pairs. The next step is the quantum Fourier transform (QFT), a quantum analogue of the classical discrete Fourier transform. The QFT converts the periodic structure in the quantum state into a frequency-domain representation. When you measure the output of the QFT, you obtain a value that is closely related to a multiple of N/r. Through continued fractions or other classical post-processing, you can extract r from this measurement.

The QFT operates on a superposition of states, performing what would classically require exponentially many Fourier transform computations in a single coherent quantum operation. This is where the exponential speedup comes from.

Step 3: Polynomial vs. Exponential Complexity

The classical General Number Field Sieve factors an n-bit integer in time approximately exp(c _ n^(1/3) _ (log n)^(2/3)), which is sub-exponential but still intractable for large n. Shor's algorithm runs in O(n^3) time (with some logarithmic factors), which is polynomial. The difference is not incremental; it is a qualitative change in computational complexity class.

For a 2,048-bit RSA key: the classical approach requires on the order of 2^112 operations. Shor's algorithm requires on the order of 2^33 operations (roughly 8 billion), which is trivially achievable for a computer that can execute them.

Current Hardware Status and Timeline

The critical qualifier is "sufficiently powerful quantum computer." Running Shor's algorithm to factor a 2,048-bit RSA key requires an estimated 4,000 to 10,000 logical (error-corrected) qubits, depending on the implementation and the error correction overhead. Given current error rates, this translates to roughly 10 to 20 million physical qubits.

As of early 2025, the largest quantum processors have around 1,000 to 1,200 physical qubits (IBM's Condor, Atom Computing's neutral atom systems), with gate fidelities that are still insufficient for running Shor's algorithm at cryptographically relevant scales. However, the trajectory is clear:

- IBM's roadmap targets 100,000+ qubits by 2033, with modular architectures connecting multiple processors.

- Google demonstrated quantum error correction below the threshold with their Willow processor in late 2024, a critical milestone for scaling to logical qubits.

- Quantinuum has achieved the highest reported two-qubit gate fidelities (99.8%+) with trapped-ion hardware.

- PsiQuantum is building a million-qubit photonic quantum computer with a target of late this decade.

Estimates for when a cryptographically relevant quantum computer (CRQC) will exist range from the early 2030s to the 2040s. The consensus view among experts, as reflected in reports from RAND Corporation, the Global Risk Institute, and national security agencies, places a non-trivial probability (15-30%) on a CRQC existing by 2035.

The Threat is Now: 'Harvest Now, Decrypt Later'

If a CRQC is still years away, why should organizations act now? The answer is a threat model that security professionals call "Harvest Now, Decrypt Later" (HNDL), sometimes also referred to as "Store Now, Decrypt Later" (SNDL).

The HNDL Attack Model

The logic is straightforward and deeply unsettling. An adversary intercepts and stores encrypted communications today. The data remains encrypted and unreadable. Years later, when a CRQC becomes available, the adversary decrypts the stored data using Shor's algorithm.

This is not a hypothetical attack. It is a rational strategy for any sophisticated adversary, and there is strong evidence that nation-state intelligence agencies are already executing it. In 2022, the U.S. National Security Agency issued guidance stating that the threat was real enough to warrant immediate action. The White House issued National Security Memorandum 10 (NSM-10) requiring federal agencies to begin inventorying their cryptographic systems and planning for PQC migration.

Data Shelf-Life: Why It Matters Now

The urgency of the HNDL threat depends on the intersection of two timelines: how long your data needs to remain confidential, and how long until a CRQC exists.

Consider these examples:

- Government classified information: Often has a secrecy requirement of 25-75 years. Data classified today must remain secure through at least 2050-2100. If a CRQC arrives by 2035, that data is vulnerable.

- Healthcare records: HIPAA requires protection for the lifetime of the patient plus some period. A medical record created for a 30-year-old today may need protection until 2090 or beyond.

- Financial records: Trade secrets, merger and acquisition plans, and proprietary financial models may need protection for 10-20 years.

- Intellectual property: Patent strategies, R&D data, and product designs often need protection for the life of the product cycle or longer.

- Personal communications: Attorney-client privilege, journalist-source communications, and personal correspondence may need indefinite protection.

If the sum of "time to migrate to PQC" plus "remaining confidentiality requirement" exceeds the "time until CRQC," then the data is at risk under the HNDL model. For many organizations, that equation already yields a negative result.

Nation-State Intelligence Implications

The HNDL threat is particularly acute in the context of nation-state espionage. Intelligence agencies routinely intercept and store vast quantities of encrypted communications. The NSA's data centers, China's surveillance infrastructure, and similar facilities in Russia, Israel, and other nations have the storage capacity to archive petabytes of encrypted traffic.

For these actors, the cost of storage is negligible compared to the potential intelligence value of decrypting diplomatic cables, military communications, intelligence reports, and trade negotiation strategies. The incentive to harvest now is overwhelming, and the only question is when the decryption capability arrives.

This is not a future problem. The harvesting is happening today, on backbone fiber optic cables, at internet exchange points, and through compromised network infrastructure. The Snowden revelations in 2013 documented programs like UPSTREAM and PRISM that intercepted vast quantities of encrypted internet traffic. While those programs targeted metadata and unencrypted content, the infrastructure for bulk collection of encrypted data is well established. Every day that passes with data protected only by RSA or ECC is another day of exposure to the HNDL threat. The window for action is not defined by when quantum computers arrive; it is defined by how long your data must remain secret after they do.

Conclusion

Shor's algorithm turns our "impossible" mathematical problem into a tractable one for quantum machines. The wall that has protected our digital economy for over four decades, the computational hardness of integer factorization and discrete logarithms, will crumble under a sufficiently powerful quantum computer.

The timeline is uncertain, but the direction is not. Quantum hardware is advancing steadily. Error correction is improving. And adversaries are already harvesting encrypted data in anticipation.

The clock is ticking. The question is not whether quantum computers will break current cryptography, but when, and whether your organization will have migrated to quantum-resistant alternatives before that day arrives.

In Part 3 of this series, we will explore exactly those alternatives: the NIST Post-Quantum Cryptography standards and the engineering challenge of migrating the world's cryptographic infrastructure before it is too late.

About Ryan Wentzel

Ryan previously served as a PCI Professional Forensic Investigator (PFI) of record for 3 of the top 10 largest data breaches in history. With over two decades of experience in cybersecurity, digital forensics, and executive leadership, he has served Fortune 500 companies and government agencies worldwide.