Taming the LLM: Using Finite-State Machines to Prevent Hallucinations in AI Workflows

By Ryan Wentzel

Table of Contents

- Introduction

- The Problem: The "Naked LLM" Anti-Pattern

- Enter the Finite-State Machine

- Architecting an FSM-Driven Document Platform

- Why This Drastically Improves Quality

- When Not to Reach for an FSM

- Conclusion

Introduction

If you are building an AI-powered chat or document generation platform, you already know the bottleneck standing between your prototype and production: hallucinations.

Large Language Models are, at their core, probabilistic next-token predictors. Under typical sampling settings the same prompt can yield different outputs, and an open-ended chat loop compounds that variability. Ask one model to simultaneously act as a friendly conversationalist, a rigorous fact-finder, and a precise document drafter, and it will blend those contexts and start inventing things: citations, clauses, customer names, API endpoints that have never existed.

The fix is not a 2,000-word system prompt that tries to anticipate every edge case. The fix is architectural. By wrapping your LLM calls in a Finite-State Machine (FSM), you can transform a chaotic generative model into a predictable, inspectable software component.

Here is a technical breakdown of how to use FSMs to constrain AI behavior, mitigate hallucinations, and ship document workflows you can actually trust in front of paying customers.

The Problem: The "Naked LLM" Anti-Pattern

When developers first build AI features, they typically reach for a single long-running conversation thread. As the user interacts, the context window fills up with system instructions, user messages, retrieved documents, tool outputs, and prior model responses—all competing for the model's attention.

Two failure modes follow almost immediately:

Context confusion. The model loses track of its current objective. Is it brainstorming options, or is it strictly quoting a clause from the master services agreement? When everything lives in one prompt, the boundaries blur.

Prompt drift. The longer and more elaborate a system prompt becomes, the more likely the model is to quietly drop instructions—usually the constraints you cared about most. The negative rules ("never invent a citation," "never answer outside this scope") are exactly the ones that get sanded off as the conversation grows.

You cannot prompt your way out of this. You have to design your way out of it.

Enter the Finite-State Machine

A Finite-State Machine is a classical model of computation: a finite set of states, a defined set of transitions between them, and an initial state. The system is in exactly one state at a time and moves between states in response to inputs, as dictated by those transitions.

For AI engineering, the relevant flavor is the Deterministic Finite Automaton (DFA): every combination of current state and input maps to exactly one next state. This strips the LLM of its ability to "go off script," because the architecture dictates the workflow, not the model's generative whims.
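One way to make the "exactly one next state" property concrete is a lookup table keyed by the current state and an event. The sketch below is illustrative only: the event names (`fields_complete`, `validation_failed`, and so on) are hypothetical, and it uses a subset of the state names from the workflow described later in this article.

```python
from enum import Enum, auto


class State(Enum):
    INTAKE = auto()
    EXTRACTION = auto()
    DRAFTING = auto()
    VALIDATION = auto()
    REVIEW = auto()


# Each (state, event) pair maps to exactly one next state.
# Event names are hypothetical labels emitted by deterministic checks.
TRANSITIONS: dict[tuple[State, str], State] = {
    (State.INTAKE, "fields_complete"): State.EXTRACTION,
    (State.EXTRACTION, "facts_extracted"): State.DRAFTING,
    (State.DRAFTING, "draft_ready"): State.VALIDATION,
    (State.VALIDATION, "validation_passed"): State.REVIEW,
    (State.VALIDATION, "validation_failed"): State.DRAFTING,
}


def next_state(current: State, event: str) -> State:
    """Return the single successor state, or raise on an undefined move."""
    try:
        return TRANSITIONS[(current, event)]
    except KeyError:
        raise ValueError(f"no transition from {current.name} on {event!r}") from None


state = State.INTAKE
for event in ["fields_complete", "facts_extracted", "draft_ready", "validation_failed"]:
    state = next_state(state, event)
print(state.name)  # DRAFTING
```

Because undefined moves raise instead of silently doing something, a model output can never push the workflow into a state the table does not allow.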

The key components, mapped to an AI context:

- States are isolated micro-tasks, each with a tight, single-purpose system prompt: INTAKE, EXTRACTION, RETRIEVAL, DRAFTING, VALIDATION, REVIEW.

- Transitions are deterministic code (plain TypeScript or Python) that decides when to advance, based on explicit success criteria like "required JSON fields populated" or "validator returned no errors."

- Routing logic is the implementation layer: a switch statement, an enum-driven dispatcher, or the State design pattern. Whatever the shape, it guarantees the model only ever sees the prompt relevant to its current state.

The mental model shift: the LLM stops being the conductor of your application and becomes a stateless function you call from inside a stateful system.
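A minimal sketch of that shift, assuming a model client that can be reduced to a function of (system prompt, user input). `fake_llm` is a hypothetical stand-in rather than a real client, and the prompt wording is abbreviated for illustration.

```python
from enum import Enum
from typing import Callable


class State(Enum):
    INTAKE = "intake"
    DRAFTING = "drafting"


# One narrow system prompt per state (wording abbreviated for illustration).
PROMPTS = {
    State.INTAKE: "You are an intake agent. Only collect the required fields.",
    State.DRAFTING: "You are a technical writer. Use only the supplied facts.",
}

# Signature of a stateless model call: (system_prompt, user_input) -> text.
LLMCall = Callable[[str, str], str]


def fake_llm(system_prompt: str, user_input: str) -> str:
    """Hypothetical stand-in for a real model client; echoes what it was given."""
    return f"[{system_prompt[:22]}...] {user_input}"


class Workflow:
    """Holds the state; the model call itself keeps no memory between steps."""

    def __init__(self, llm: LLMCall) -> None:
        self.state = State.INTAKE
        self.llm = llm

    def step(self, user_input: str) -> str:
        # The model only ever sees the prompt for the current state.
        return self.llm(PROMPTS[self.state], user_input)


wf = Workflow(fake_llm)
print(wf.step("I need an NDA."))
wf.state = State.DRAFTING  # in practice, set by transition code, not by the model
print(wf.step("Draft section 1."))
```

The state lives on the `Workflow` object, and the model function receives nothing except the prompt for the current state and the current input.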

Architecting an FSM-Driven Document Platform

Here is how this plays out in a realistic scenario—an AI platform that generates a complex legal or technical document from a user conversation.

Instead of handing the user to a single LLM and asking it to "write the document," you route them through a strict state machine.

State 1 — Intake

"You are an intake agent. Your only job is to ask the user questions until every field in this JSON schema is populated. Do not answer questions outside this scope. Do not draft the document."

The model converses with the user. After each turn, deterministic code validates the fields collected so far against the JSON schema. When every required field is populated and type-checked, the FSM transitions. The model is never asked to decide whether intake is "done"; that decision belongs to code.
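The completeness check can be as plain as the sketch below. The field names and types are hypothetical, and a production system would more likely run a full JSON Schema validator; the point is only that the "are we done?" decision is ordinary code.

```python
from typing import Any

# Hypothetical intake schema: field name -> expected Python type.
REQUIRED_FIELDS: dict[str, type] = {
    "party_name": str,
    "effective_date": str,
    "term_months": int,
}


def missing_fields(collected: dict[str, Any]) -> list[str]:
    """List required fields that are absent, empty, or of the wrong type."""
    problems = []
    for name, expected in REQUIRED_FIELDS.items():
        value = collected.get(name)
        if value is None or value == "" or not isinstance(value, expected):
            problems.append(name)
    return problems


def intake_complete(collected: dict[str, Any]) -> bool:
    """Code, not the model, decides whether INTAKE may transition."""
    return not missing_fields(collected)


partial = {"party_name": "Acme Corp", "term_months": "twelve"}
print(missing_fields(partial))   # ['effective_date', 'term_months']
print(intake_complete(partial))  # False
```

The list returned by `missing_fields` can also be fed back into the next intake turn, so the model knows which questions remain.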

State 2 — Extraction and Retrieval

"Extract the key facts from the transcript below. Return them as a structured list. Do not infer, summarize, or add anything not explicitly stated."

This state is invisible to the user. It is also where Retrieval-Augmented Generation belongs: query a vector store for verified clauses, precedents, or product specs and assemble a structured context payload. The output of this state is data, not prose.
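As a rough illustration of "data, not prose," the payload might look like the sketch below. Everything here is an assumption for the example: the `ContextPayload` shape, the clause library, and especially `retrieve_clauses`, which uses exact topic matching as a stand-in for a real vector-store query.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class ContextPayload:
    """Structured output of the extraction/retrieval state: data, not prose."""
    facts: dict[str, str]
    clauses: list[dict[str, str]] = field(default_factory=list)


# Hypothetical verified clause library; a real system would query a vector store.
CLAUSE_LIBRARY = [
    {"id": "conf-01", "topic": "confidentiality",
     "text": "Each party shall protect Confidential Information."},
    {"id": "term-01", "topic": "termination",
     "text": "Either party may terminate with 30 days notice."},
]


def retrieve_clauses(topics: list[str]) -> list[dict[str, str]]:
    """Stand-in for retrieval: exact topic match instead of similarity search."""
    return [c for c in CLAUSE_LIBRARY if c["topic"] in topics]


def build_payload(facts: dict[str, str], topics: list[str]) -> ContextPayload:
    """Combine extracted facts and retrieved clauses into one payload."""
    return ContextPayload(facts=facts, clauses=retrieve_clauses(topics))


payload = build_payload({"party_name": "Acme Corp"}, ["confidentiality"])
print(json.dumps(asdict(payload), indent=2))
```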

State 3 — Drafting

"You are a technical writer. Generate Section 1 using only the facts inside the <CONTEXT> block. If a required fact is missing, emit [PLACEHOLDER: description]. Do not invent data."

The model is now operating inside a tight box. It is not reasoning about what the document should say; that was decided upstream. It is formatting known facts into prose. Hallucination surface area shrinks sharply, because every claim in the draft must trace back to a supplied fact or surface as an explicit placeholder.
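One possible way to assemble that drafting prompt in code is sketched below. The function name, the closing `</CONTEXT>` tag, and the per-gap instruction line are assumptions added for the example; only the `<CONTEXT>` block and the `[PLACEHOLDER: ...]` convention come from the prompt above.

```python
def build_drafting_prompt(section: str, required: list[str], facts: dict[str, str]) -> str:
    """Render known facts into a <CONTEXT> block and flag the missing ones."""
    known = [f"{name}: {facts[name]}" for name in required if name in facts]
    missing = [name for name in required if name not in facts]
    lines = [
        f"You are a technical writer. Generate {section} using only the facts "
        "inside the <CONTEXT> block. Do not invent data.",
        "<CONTEXT>",
        *known,
        "</CONTEXT>",
    ]
    for name in missing:
        # Tell the model exactly which placeholder to emit for each gap.
        lines.append(
            f"The fact '{name}' is missing; emit [PLACEHOLDER: {name}] where it is needed."
        )
    return "\n".join(lines)


prompt = build_drafting_prompt(
    "Section 1",
    required=["party_name", "effective_date"],
    facts={"party_name": "Acme Corp"},
)
print(prompt)
```

Computing the list of missing facts in code, rather than asking the model to notice gaps, keeps the placeholder behavior predictable.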

State 4 — Validation

A validator—rules-based, schema-based, or a separate LLM call with a critic prompt—checks the draft against the extracted facts. Any [PLACEHOLDER] token, missing citation, or unsupported claim sends the FSM back to an earlier state with a specific error. Otherwise, it advances to human review.
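A rules-based validator along these lines could be as small as the sketch below. The routing policy is an assumption made for the example (unfilled placeholders go back to INTAKE, unused facts go back to DRAFTING), and the verbatim-match rule is deliberately crude.

```python
import re

PLACEHOLDER = re.compile(r"\[PLACEHOLDER:\s*([^\]]+)\]")


def validate_draft(draft: str, facts: dict[str, str]) -> tuple[str, list[str]]:
    """Return (next_state, errors) using rules only; no model call involved."""
    errors = [f"unfilled placeholder: {m.strip()}" for m in PLACEHOLDER.findall(draft)]
    for name, value in facts.items():
        # Simple rule: every extracted fact value must appear verbatim in the draft.
        if value not in draft:
            errors.append(f"fact not used: {name}")
    if any(e.startswith("unfilled placeholder") for e in errors):
        return "INTAKE", errors      # missing data: go back and ask the user
    if errors:
        return "DRAFTING", errors    # data exists but the draft ignored it
    return "REVIEW", errors


draft = "This agreement is between Acme Corp and [PLACEHOLDER: counterparty name]."
print(validate_draft(draft, {"party_name": "Acme Corp"}))
# ('INTAKE', ['unfilled placeholder: counterparty name'])
```

Returning the error list alongside the next state gives the earlier state a specific problem to fix instead of a generic "try again."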

Each state does one thing. Each transition is a decision made in code. The user sees a smooth experience; under the hood, you have a workflow you can reason about.

Why This Drastically Improves Quality

Treating the LLM as a stateless function called from a stateful architecture pays off in four concrete ways.

Prompt isolation. Instead of one monolithic "god prompt" that fails in unpredictable ways, you have a handful of modular micro-prompts. When drafting goes wrong, you know exactly which prompt to edit—and you can edit it without fear of breaking intake.

Predictability. Application state lives in deterministic code. The LLM is relegated to text processing inside well-defined boundaries. Your workflow becomes something you can draw on a whiteboard and a new engineer can understand in an afternoon.

Debuggability and telemetry. Because every state transition is logged, traces become forensic. When a document comes out wrong, you do not stare at a 4,000-token conversation trying to guess what happened. You ask: did INTAKE fail to capture the field, did EXTRACTION drop it, or did DRAFTING ignore it? The answer is in the logs.
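To illustrate what such a trace might look like, here is a small sketch using Python's standard `logging` module. The record fields and the `first_failure` helper are hypothetical; the idea is that one structured record per transition is enough to locate where a run went backwards.

```python
import json
import logging
import time
from typing import Optional

logger = logging.getLogger("fsm")

# Forward order of the workflow, used to spot backward moves.
ORDER = ["INTAKE", "EXTRACTION", "DRAFTING", "VALIDATION", "REVIEW"]


def log_transition(doc_id: str, src: str, dst: str, reason: str) -> dict:
    """Emit one structured record per state transition and return it."""
    record = {
        "ts": time.time(),
        "doc_id": doc_id,  # hypothetical identifier for the document being built
        "from": src,
        "to": dst,
        "reason": reason,
    }
    logger.info(json.dumps(record))
    return record


def first_failure(trace: list[dict]) -> Optional[dict]:
    """Find the first backward move in a trace, i.e. where the run went wrong."""
    for rec in trace:
        if ORDER.index(rec["to"]) < ORDER.index(rec["from"]):
            return rec
    return None


logging.basicConfig(level=logging.INFO, format="%(message)s")
trace = [
    log_transition("doc-1", "INTAKE", "EXTRACTION", "fields_complete"),
    log_transition("doc-1", "EXTRACTION", "DRAFTING", "facts_extracted"),
    log_transition("doc-1", "DRAFTING", "VALIDATION", "draft_ready"),
    log_transition("doc-1", "VALIDATION", "DRAFTING", "unfilled placeholder"),
]
print(first_failure(trace)["reason"])  # unfilled placeholder
```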

Cheaper evaluation. Each state can be evaluated in isolation with its own test set. You no longer need end-to-end evals for every prompt change—you need a focused eval for the state you touched. This is the difference between shipping AI features weekly and shipping them quarterly.
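A per-state eval can be a very small harness. In the sketch below, the test cases are invented and `stub_extraction` is a deliberately naive stand-in for the EXTRACTION state (a real handler would call the model), so the score itself means nothing beyond showing the mechanics.

```python
from typing import Callable

# Hypothetical test set for one state: (transcript, expected extracted facts).
EXTRACTION_CASES = [
    ("The client is Acme Corp.", {"party_name": "Acme Corp"}),
    ("We are signing with Globex.", {"party_name": "Globex"}),
]


def stub_extraction(transcript: str) -> dict[str, str]:
    """Stand-in for the EXTRACTION state; a real handler would call the model."""
    words = transcript.rstrip(".").split()
    if "is" in words:
        return {"party_name": " ".join(words[words.index("is") + 1:])}
    return {}


def evaluate(handler: Callable[[str], dict[str, str]], cases: list) -> float:
    """Fraction of cases where the state's output matches the expectation exactly."""
    passed = sum(1 for text, expected in cases if handler(text) == expected)
    return passed / len(cases)


print(evaluate(stub_extraction, EXTRACTION_CASES))  # 0.5
```

Because `evaluate` takes the handler as an argument, the same test set can score the old and new version of a single state's prompt without running the rest of the workflow.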

When Not to Reach for an FSM

FSMs are not free. They add structure, and structure has a cost. For genuinely open-ended, exploratory chat experiences—a brainstorming partner, a tutor, a coding copilot—a rigid state machine will feel like a straitjacket and frustrate users.

The heuristic: if your workflow has a defined output artifact and a definable notion of "correct," an FSM will pay for itself many times over. If the value of the product is the conversation itself, keep things loose and invest in guardrails of a different kind.

For agentic systems that need more flexibility than a strict DFA but more structure than free-form chat, look at graph-based orchestration frameworks like LangGraph or stateful workflow engines like Temporal. Both keep control flow in explicit code rather than in the model: the same philosophy as an FSM, with more give.

Conclusion

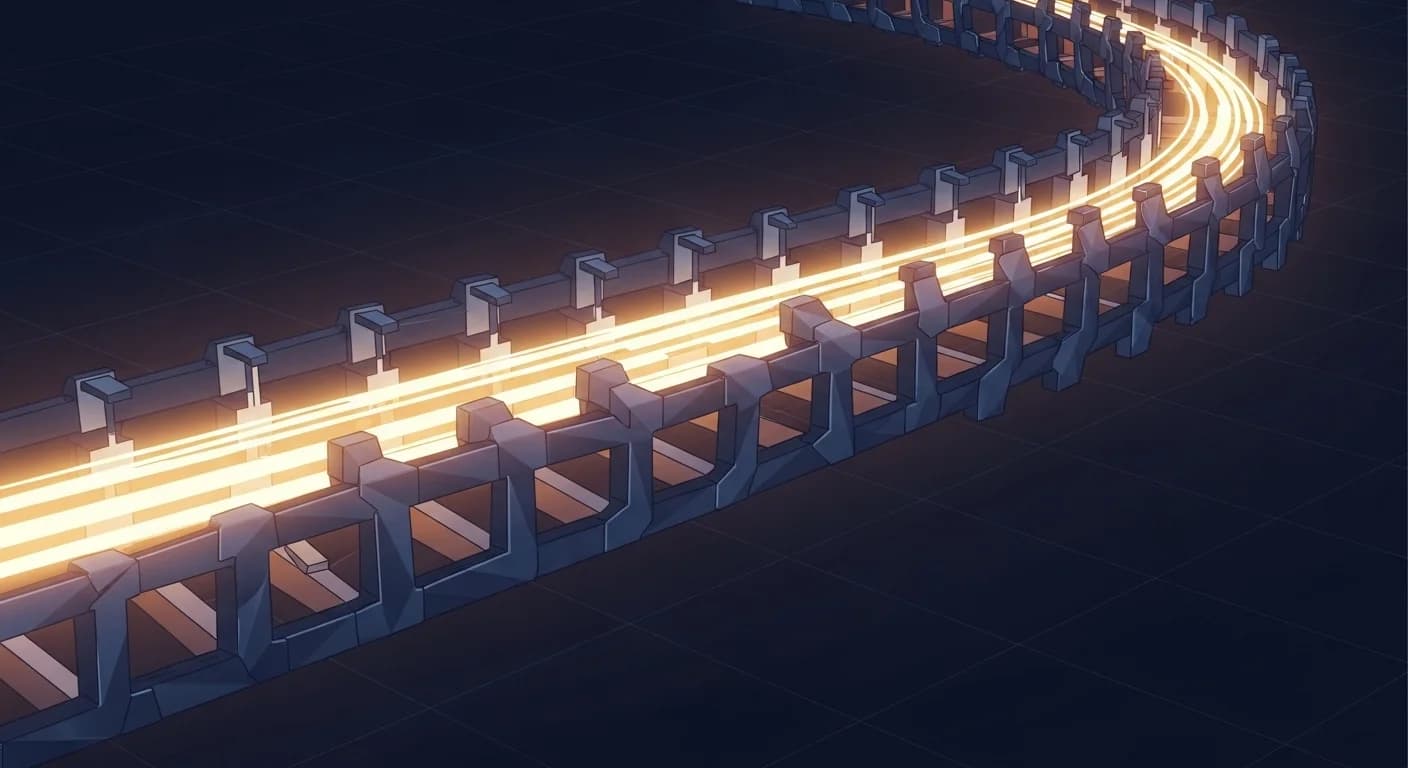

Building reliable AI platforms requires a mental shift from prompt engineering to systems engineering. Stop relying on the model to police itself. Build a deterministic track for it to run on, break complex cognitive work into safe and verifiable states, and let traditional code do what traditional code is good at: making decisions you can trust.

The model is not your application. It is a component inside it. Architect accordingly.

About Ryan Wentzel

Ryan previously served as a PCI Professional Forensic Investigator (PFI) of record for 3 of the top 10 largest data breaches in history. With over two decades of experience in cybersecurity, digital forensics, and executive leadership, he has served Fortune 500 companies and government agencies worldwide.